As AI agents become more integrated into our terminals and IDEs, the boundary between "Private Code" and "Public AI Cloud" is disappearing. As of March 2026, "Prompt Leakage" has become the #1 cause of accidental data exposure in tech startups.

Why 'Standard' Privacy Settings Aren't Enough

Many developers rely on "Temporary Chat" modes. However, from a networking perspective, the moment you hit "Send," your data leaves your machine, regardless of how the provider handles it afterwards. If your prompt contains any of the following:

- Hardcoded API keys (OpenAI, AWS, Stripe)

- Personally Identifiable Information (PII): user emails, phone numbers, physical addresses

- Internal infrastructure data: database URLs, internal IP addresses

then you are trusting a third party to manage your most sensitive assets.
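To make the three categories above concrete, here is a minimal Python sketch of a pre-send check that flags them in a prompt. The pattern set and the name `find_sensitive` are assumptions made for illustration; real key formats vary by provider, so treat the regexes as a starting point rather than a complete detector.

```python
import re

# Illustrative patterns only (assumed, not exhaustive): real secrets come in
# many more formats than these, and regexes can produce false positives.
SENSITIVE_PATTERNS = {
    "api_key": re.compile(r"\b(?:sk-[A-Za-z0-9_-]{16,}|AKIA[0-9A-Z]{16})\b"),
    "email": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
    "database_url": re.compile(
        r"\b(?:postgres(?:ql)?|mysql|mongodb(?:\+srv)?|redis)://\S+"
    ),
    "private_ip": re.compile(
        r"\b(?:10\.\d{1,3}\.\d{1,3}\.\d{1,3}"
        r"|192\.168\.\d{1,3}\.\d{1,3}"
        r"|172\.(?:1[6-9]|2\d|3[01])\.\d{1,3}\.\d{1,3})\b"
    ),
}


def find_sensitive(prompt: str) -> dict[str, list[str]]:
    """Return each category found in the prompt with the matching substrings."""
    findings = {}
    for name, pattern in SENSITIVE_PATTERNS.items():
        matches = pattern.findall(prompt)
        if matches:
            findings[name] = matches
    return findings
```

An empty result does not prove a prompt is clean; it only means none of these particular patterns matched.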

4 Levels of Prompt Sanitization

To work safely with models like GPT-5.4 Thinking or Claude 4.6 Opus, you should follow this hierarchy of security:

1. The Redaction Layer (Mandatory)

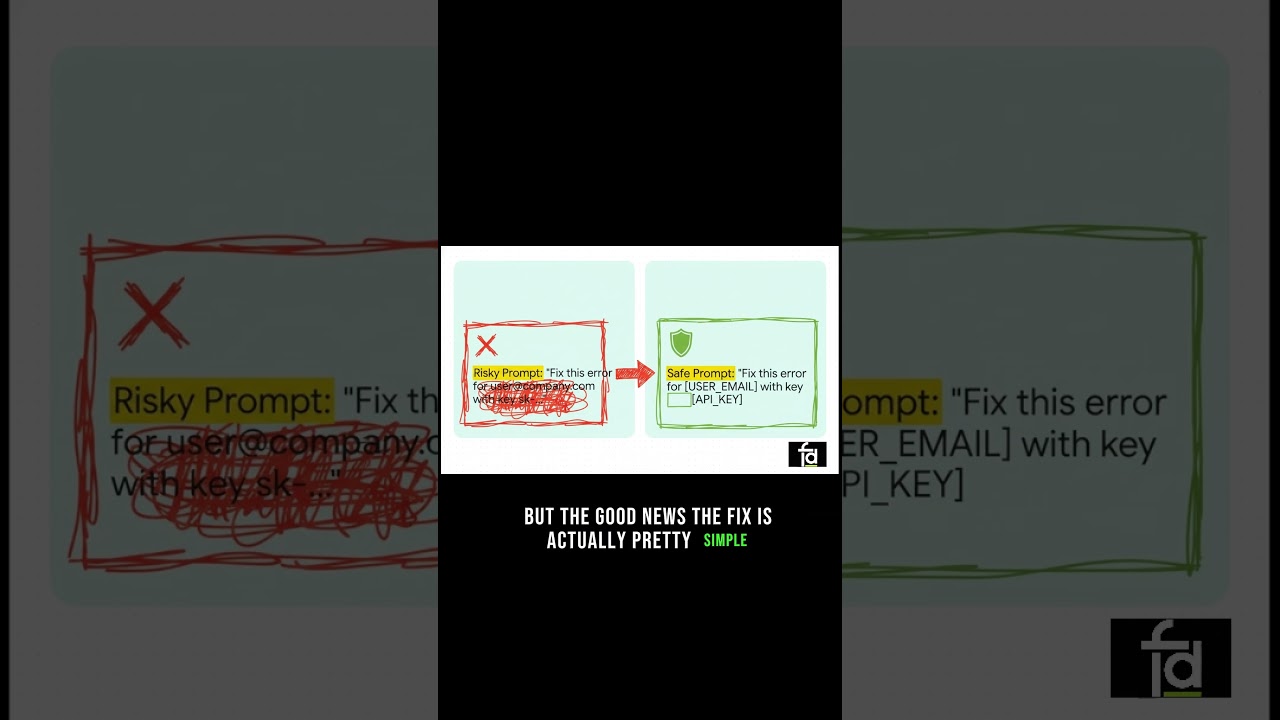

Before pasting, replace all unique identifiers with generic tags.

- Instead of: `error at user@company.com`

- Use: `error at [USER_EMAIL]`

This preserves the logic for the AI while removing the sensitive value.
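The substitution described above can be sketched in a few lines of Python. The `[USER_EMAIL]` tag comes from the example; the `[API_KEY]` and `[IP_ADDRESS]` tags, the regexes, and the function name `redact` are assumptions added for illustration and will not catch every format.

```python
import re

# Ordered (pattern, tag) pairs. The patterns are illustrative assumptions,
# not an exhaustive list of identifier formats.
REDACTIONS = [
    (re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"), "[USER_EMAIL]"),
    (re.compile(r"\bsk-[A-Za-z0-9_-]{16,}"), "[API_KEY]"),
    (re.compile(r"\b(?:\d{1,3}\.){3}\d{1,3}\b"), "[IP_ADDRESS]"),
]


def redact(prompt: str) -> str:
    """Replace each matched identifier with its generic tag."""
    for pattern, tag in REDACTIONS:
        prompt = pattern.sub(tag, prompt)
    return prompt


print(redact("error at user@company.com"))  # prints: error at [USER_EMAIL]
```

Because the tags name the kind of value that was removed, the model can still reason about the error without seeing the value itself.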

2. The Context Pruning Layer

Only send the relevant code snippet. Don't paste the whole .env file just to fix one function. Some modern models offer 1M-token context windows, but that doesn't mean you should fill them with secrets.
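One way to prune context, assuming the code in question is Python, is to pull out only the function you need help with instead of copying the whole file. The helper name `extract_function` is hypothetical; the sketch relies on the standard library's `ast.get_source_segment`.

```python
import ast
from typing import Optional


def extract_function(source: str, name: str) -> Optional[str]:
    """Return the source text of the first function called `name`, or None."""
    tree = ast.parse(source)
    for node in ast.walk(tree):
        is_function = isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef))
        if is_function and node.name == name:
            # Returns only this function's text; module-level constants
            # (including any hardcoded secrets) are left behind.
            return ast.get_source_segment(source, node)
    return None
```

The extracted snippet can still reference secrets by name or contain them inline, so it is worth running it through a redaction step before sending.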

3. The Local-Only Verification

Use a tool that runs entirely in your browser's memory. If the tool has a backend, you are just moving the risk from OpenAI to a smaller, potentially less secure tool provider.

4. CSP Hardening

For senior devs: ensure the tools you use ship a strict Content Security Policy (CSP) that prevents them from "phoning home" with your data.
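As a rough way to audit this, the sketch below parses a `Content-Security-Policy` header value and checks whether script-initiated connections are fully blocked. It assumes you have already copied the header from the tool's response (for example from the browser's network panel), and the function names are hypothetical. It only looks at `connect-src` and its `default-src` fallback, so it is a partial check: other channels such as image loads or form submissions are governed by other directives.

```python
def parse_csp(header: str) -> dict[str, list[str]]:
    """Split a CSP header value into {directive: [source expressions]}."""
    directives = {}
    for part in header.split(";"):
        tokens = part.split()
        if tokens:
            # If a directive is repeated, the first occurrence is the one used.
            directives.setdefault(tokens[0].lower(), tokens[1:])
    return directives


def blocks_script_connections(header: str) -> bool:
    """True if fetch/XHR/WebSocket-style connections are restricted to 'none'."""
    directives = parse_csp(header)
    # connect-src falls back to default-src when it is not set explicitly.
    sources = directives.get("connect-src", directives.get("default-src"))
    return sources == ["'none'"]
```

A policy such as `default-src 'self'; connect-src 'none'` passes this check, while `default-src 'self'` alone does not, because the page could still send requests to its own origin.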

Technical Checklist for 2026 AI Compliance

| Data Type | Risk Level | Recommended Action |
|---|---|---|
| API Keys (sk-...) | 🔴 Critical | Auto-redact locally |
| JWT Tokens | 🔴 Critical | Inspect locally, never share |
| User Emails | 🟡 High | Replace with placeholders |
| Database Schemas | 🟢 Low | Safe to share if keys are removed |
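For the "inspect locally" row, a JWT's payload can be read on your own machine with nothing but the standard library, so there is no need to paste the token into an online decoder or a chat window. This is a minimal sketch with a hypothetical function name; it only decodes the payload and does not verify the signature.

```python
import base64
import json


def decode_jwt_payload(token: str) -> dict:
    """Decode the payload segment of a JWT locally. Does NOT verify the signature."""
    parts = token.split(".")
    if len(parts) != 3:
        raise ValueError("expected three dot-separated segments")
    payload_b64 = parts[1]
    # JWT segments are unpadded base64url; restore the padding before decoding.
    payload_b64 += "=" * (-len(payload_b64) % 4)
    return json.loads(base64.urlsafe_b64decode(payload_b64))
```

If you need the model's help with a claim, share the decoded field names with placeholder values rather than the token itself.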

Conclusion: Engineering for Sovereignty

In the race for AI velocity, don't sacrifice your data sovereignty. By sanitizing your inputs locally, you can use the world's most powerful reasoning models without ever fearing a security audit.

👉 Sanitize Your Prompt Locally Before Sending to LLM